If you've spent any time integrating a bioreactor control system with an external analytics platform, you already know that "it supports OPC-UA" is not the end of the conversation. It's the beginning. The address space structure, subscription configuration, authentication method, and how each vendor implements the specification all matter, and they all vary in ways that are rarely documented in the manual you actually received.

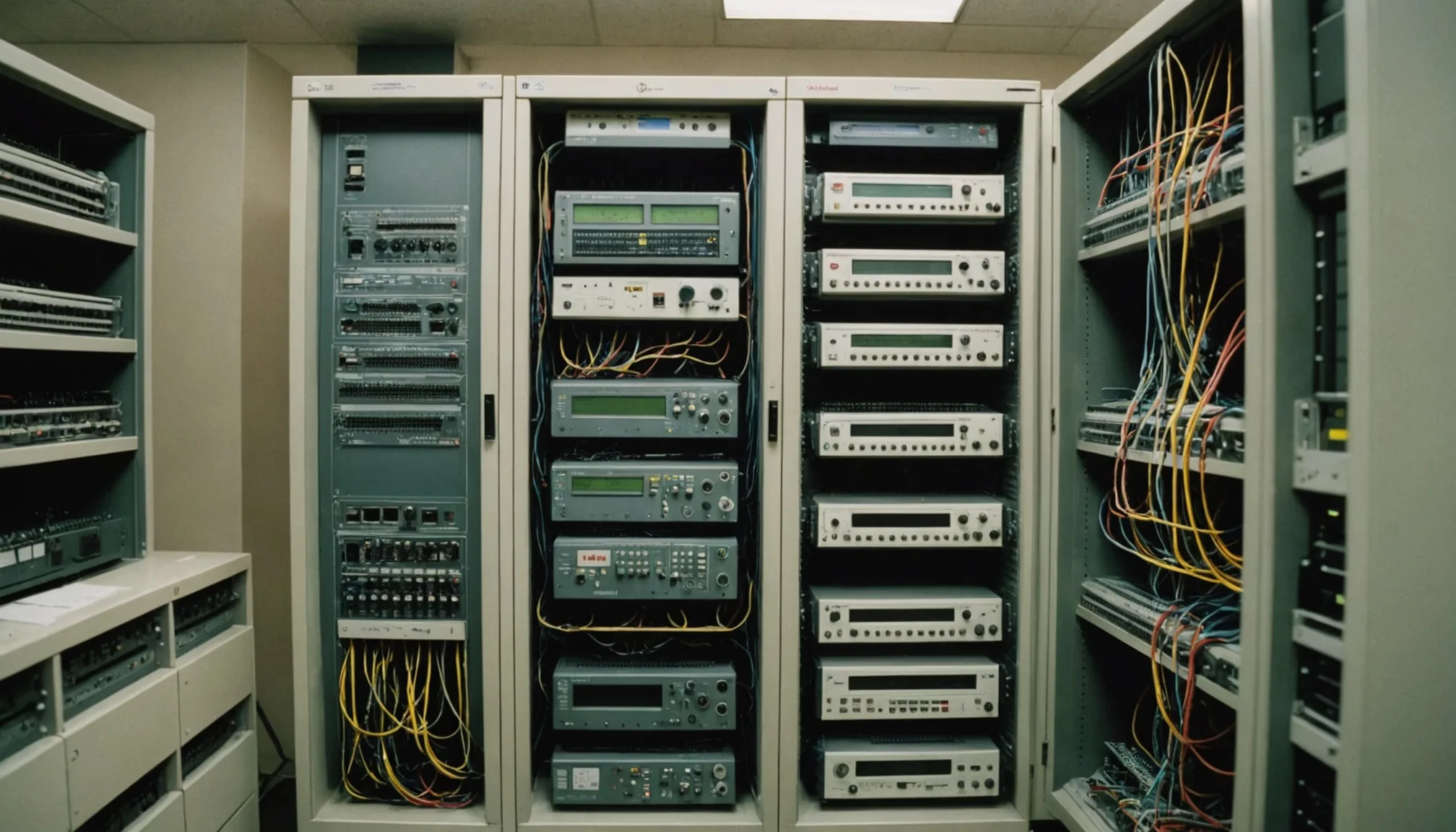

What OPC-UA Address Space Modeling Looks Like in Practice

OPC-UA's information model is hierarchical: nodes live in an address space, organized into objects, variables, and methods. For a bioreactor, the typical hierarchy mirrors the physical equipment: a vessel object contains sensor variables for dissolved oxygen (DO), pH, temperature, agitation rate, and feed pump flow. On a well-structured implementation, such as the OPC-UA server on Sartorius BIOSTAT controllers, you'll find a clean object tree where each sensor variable carries the right data type, engineering unit metadata, and timestamp. Not every vendor delivers that out of the box.

In our experience connecting to Applikon systems, the address space is flatter, with variable node IDs that follow a numeric scheme rather than descriptive browse names. You can still map them, but you need the vendor's OPC-UA configuration sheet alongside the subscription setup, or you're guessing which NodeId corresponds to which physical sensor. Eppendorf BioFlo controllers vary by firmware version: systems shipped before 2022 often expose dissolved oxygen and pH on different object paths than the current builds, which matters when you're building a mapping table that has to survive a control system firmware update.
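One way to make a mapping table survive a firmware update is to key it by controller model and firmware generation. The lookup should then fail loudly when a controller reports a generation the table doesn't cover. Below is a minimal sketch in Python. Every NodeId string, firmware label, and signal name in it is a hypothetical placeholder, not a value from any vendor's configuration sheet.

```python
# Hypothetical mapping table: (controller model, firmware generation) -> {signal: NodeId}.
# NodeId strings are placeholders; real values come from the vendor's OPC-UA configuration sheet.
NODE_MAP: dict[tuple[str, str], dict[str, str]] = {
    ("BioFlo", "pre-2022"): {
        "DO": "ns=2;s=Vessel1.Legacy.DO",
        "pH": "ns=2;s=Vessel1.Legacy.pH",
    },
    ("BioFlo", "2022+"): {
        "DO": "ns=2;s=Vessel1.Sensors.DO",
        "pH": "ns=2;s=Vessel1.Sensors.pH",
    },
}


class UnmappedFirmwareError(KeyError):
    """Raised when no mapping exists for a controller/firmware/signal combination."""


def resolve_node_ids(model: str, firmware: str, signals: list[str]) -> dict[str, str]:
    """Return the NodeId for each requested signal, refusing to guess."""
    try:
        table = NODE_MAP[(model, firmware)]
    except KeyError:
        raise UnmappedFirmwareError(f"no mapping for {model} firmware {firmware!r}") from None
    missing = [s for s in signals if s not in table]
    if missing:
        raise UnmappedFirmwareError(f"{model} {firmware}: unmapped signals {missing}")
    return {s: table[s] for s in signals}
```

Raising on an unknown firmware generation is deliberate. A silent fallback to the old object paths is exactly how a mapping breaks unnoticed after an update.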

New Brunswick (now Eppendorf) legacy units are a category of their own. Some controllers expose process data via OPC-DA rather than OPC-UA, which means you're working through a DA-to-UA wrapper. That wrapper introduces latency. Plan for it.
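Before setting alignment windows or alarm delays, it helps to measure what the wrapper actually adds. Below is a minimal sketch. It assumes you can log each notification's source timestamp alongside the client's receive time, both in seconds on a common synchronized clock. The function name and the choice of the 95th percentile are illustrative assumptions.

```python
import statistics


def latency_profile(pairs: list[tuple[float, float]]) -> dict[str, float]:
    """Summarize delivery latency from (source_timestamp, receive_time) pairs, in seconds.

    Assumes both timestamps come from synchronized clocks; otherwise clock
    offset is indistinguishable from wrapper latency.
    """
    latencies = [recv - src for src, recv in pairs]
    if len(latencies) < 2:
        raise ValueError("need at least two samples")
    # quantiles(n=20) returns 19 cut points; index 18 is the 95th percentile.
    # "inclusive" keeps the estimate within the observed range.
    p95 = statistics.quantiles(latencies, n=20, method="inclusive")[18]
    return {
        "median": statistics.median(latencies),
        "p95": p95,
        "max": max(latencies),
    }
```

Sizing buffers against the tail (p95 or max) rather than the median is the conservative choice, because wrapper latency tends to spike under load.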

Deadband and Sampling Rate: The Trade-Off That Costs Batches

OPC-UA subscriptions give you two mechanisms for controlling data volume: sampling rate (how often the server checks the monitored item) and deadband (the minimum change threshold before a value change notification is queued). These two parameters interact, and the wrong combination leads to silent data gaps that don't surface until a batch deviation investigation.

For DO and pH, we typically configure sampling at 5-second intervals with absolute deadbands of 0.5 percentage points of saturation and 0.02 pH units, respectively. Tighter than that and you're flooding the historian with noise. Looser than that, and a developing oxygen transfer limitation can drift by the full deadband width before your subscription delivers a change event. With a 3% deadband, that's 3 percentage points. That 3% is enough to matter for a high-cell-density fed-batch: not alarming on the control dashboard, but meaningful for a golden batch trajectory comparison running against historical data.
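The interaction is easy to see in a simulation. Below is a minimal sketch in Python of the absolute deadband rule from the OPC-UA DataChangeFilter: a sampled value is reported only when it differs from the last *reported* value by more than the deadband. It is applied to a hypothetical DO trace, sampled every 5 seconds and drifting down 0.25 percentage points per sample. The trace and function names are illustrative, not taken from any controller.

```python
def deadband_filter(samples, deadband):
    """Return the (t, value) samples an absolute-deadband filter would report.

    A sample is reported only if it differs from the last reported value by
    more than `deadband`; the first sample is always reported.
    """
    reported = []
    last = None
    for t, v in samples:
        if last is None or abs(v - last) > deadband:
            reported.append((t, v))
            last = v
    return reported


def max_hidden_drift(samples, deadband):
    """Largest gap between the true sampled value and the last reported value."""
    reported = dict(deadband_filter(samples, deadband))
    worst, last = 0.0, None
    for t, v in samples:
        if t in reported:
            last = reported[t]
        worst = max(worst, abs(v - last))
    return worst


# Hypothetical DO trace: 5-second samples drifting down 0.25 points per sample for 2 minutes.
do_trace = [(5 * k, 40.0 - 0.25 * k) for k in range(25)]
for db in (0.5, 3.0):
    print(db, len(deadband_filter(do_trace, db)), max_hidden_drift(do_trace, db))
```

With the 0.5 deadband, the filter reports 9 of the 25 samples and never hides more than 0.5 points of drift. With 3.0, it reports only 2 samples and hides up to 3.0 points. In that case, no notification goes out until DO has fallen 3.25 points, 65 seconds into the drift.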

Temperature and agitation are more forgiving on deadband because their dynamics are slower, but their sampling rate still matters for time-series alignment. If temperature is sampled at 30-second intervals while DO is sampled at 5 seconds, your multivariate analysis is running on asynchronous timestamps. Most analytics pipelines handle this with linear interpolation, but you should know it's happening. The interpolation introduces a small bias into any correlation analysis between temperature and DO recovery after a fed-batch pulse.
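Below is a minimal sketch of that alignment step. It interpolates a 30-second temperature series onto 5-second DO timestamps. The function name is illustrative, and a production pipeline would more likely use its dataframe library's resampling than a hand-rolled loop.

```python
from bisect import bisect_right


def interpolate_onto(target_times, source):
    """Linearly interpolate a sparse, time-sorted (t, value) series onto target timestamps.

    Targets outside the source's time span return None instead of being
    extrapolated, so gaps stay visible downstream.
    """
    if not source:
        raise ValueError("source series is empty")
    times = [t for t, _ in source]
    values = [v for _, v in source]
    out = []
    for t in target_times:
        if t < times[0] or t > times[-1]:
            out.append(None)
            continue
        i = bisect_right(times, t)
        if i == len(times):  # t equals the last source timestamp
            out.append(values[-1])
            continue
        t0, t1 = times[i - 1], times[i]
        v0, v1 = values[i - 1], values[i]
        out.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
    return out
```

Every value placed between two 30-second samples is a straight-line guess. Any real temperature excursion shorter than 30 seconds gets flattened, and that is where the small bias in temperature/DO correlations comes from.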

Fact: in a 96-hour fed-batch run, a 5-second DO sampling interval caps the record at 69,120 samples per vessel (345,600 s ÷ 5 s). With a properly configured deadband, you typically collect somewhere between 60,000 and that ceiling. Compare that to a 30-second sampling interval with no deadband: roughly 11,500 points (345,600 s ÷ 30 s = 11,520), but with significant noise. Neither extreme is right. Find the middle.
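The ceilings follow directly from run length divided by sampling interval. A deadband can only remove points from below that ceiling. Here is a quick check in Python, with an illustrative function name:

```python
def max_samples(run_hours: float, interval_s: float) -> int:
    """Upper bound on samples per vessel: one per sampling interval, no deadband."""
    return int(run_hours * 3600 // interval_s)


print(max_samples(96, 5), max_samples(96, 30))
```

Dividing the count you actually archived by this ceiling gives the fraction of samples your deadband is passing through. That fraction is a useful sanity check when tuning.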

Authentication: Certificates vs. Username Tokens

OPC-UA separates channel security from user authentication. The message security mode is one of None, Sign, or Sign and Encrypt. Independently, the client authenticates its user with an identity token, typically a username/password or an X.509 certificate (anonymous and issued tokens also exist). Pharmaceutical manufacturing environments typically require Sign and Encrypt, because the raw data flowing over the connection feeds into 21 CFR Part 11 audit trails. That's a compliance boundary you don't want to blur.
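A client can enforce that policy when it chooses an endpoint from the server's GetEndpoints response. Below is a minimal sketch in plain Python. The enum values mirror MessageSecurityMode and UserTokenType as defined in OPC-UA Part 4. The `Endpoint` class and `acceptable_endpoints` function are hypothetical stand-ins for whatever your OPC-UA client library returns.

```python
from dataclasses import dataclass
from enum import IntEnum


class MessageSecurityMode(IntEnum):
    # Values as defined for MessageSecurityMode in OPC-UA Part 4.
    INVALID = 0
    NONE = 1
    SIGN = 2
    SIGN_AND_ENCRYPT = 3


class UserTokenType(IntEnum):
    # Values as defined for UserTokenType in OPC-UA Part 4.
    ANONYMOUS = 0
    USERNAME = 1
    CERTIFICATE = 2
    ISSUED_TOKEN = 3


@dataclass(frozen=True)
class Endpoint:
    # Hypothetical, simplified view of an EndpointDescription.
    url: str
    security_mode: MessageSecurityMode
    user_tokens: tuple[UserTokenType, ...]


def acceptable_endpoints(endpoints, allowed_tokens):
    """Keep endpoints that sign and encrypt the channel and accept an allowed identity token."""
    return [
        ep for ep in endpoints
        if ep.security_mode == MessageSecurityMode.SIGN_AND_ENCRYPT
        and any(tok in allowed_tokens for tok in ep.user_tokens)
    ]
```

Filtering on both axes separately makes the policy explicit in code. "Encrypted channel" and "certificate user" are independent requirements, and a review can check each one.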

Certificate-based authentication is the correct long-term approach, but it introduces operational overhead. Each client application that connects to the bioreactor OPC-UA server needs its certificate registered and trusted in the server's trust list. When you're connecting four bioreactors across two control system vendors and a data gateway, that's eight to twelve certificates to provision, rotate on expiry, and track. On a DeltaV system, certificate management goes through the DeltaV certificate store. On a standalone PLC exposed through a Kepware OPC-UA server, it goes through Kepware's configuration console. Neither workflow is especially painful, but neither is automatic.

Username/password tokens are simpler to deploy and adequate for isolated local networks that are already separated from external access by network architecture. Honest assessment: most CDMOs running fewer than 20 bioreactors use username tokens internally and rely on network segmentation rather than certificate management. That's a legitimate operational choice, provided the network segmentation is actually maintained and documented.

One practical note on certificate validity periods. OPC-UA server implementations commonly default to one-year certificate validity. In our tracking of integration incidents, expired server certificates are one of the top causes of silent subscription drops. The client-server connection terminates cleanly when the certificate expires, and the historian simply stops receiving data without generating a hard alarm. Set calendar reminders for certificate rotation 30 days before expiry. Simple, but it saves batch records.
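Calendar reminders work, but the same check is easy to automate against an inventory of every certificate in the chain. Below is a minimal sketch. It assumes you maintain that inventory yourself as an owner label and an expiry date per certificate. Reading expiry dates directly from the certificate files would need an X.509 parsing library and is left out here. `CertRecord` and `due_for_rotation` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta

ROTATION_LEAD = timedelta(days=30)  # rotate 30 days before expiry


@dataclass(frozen=True)
class CertRecord:
    owner: str       # hypothetical label, e.g. "bioreactor-3 server" or "gateway client"
    not_after: date  # end of the certificate's validity period


def due_for_rotation(certs, today):
    """Return certificates that are expired or inside the rotation window, soonest first."""
    due = [c for c in certs if c.not_after - today <= ROTATION_LEAD]
    return sorted(due, key=lambda c: c.not_after)
```

Running this daily and routing any non-empty result to the same channel as process alarms would turn an expiry into a hard alarm rather than a silent gap.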

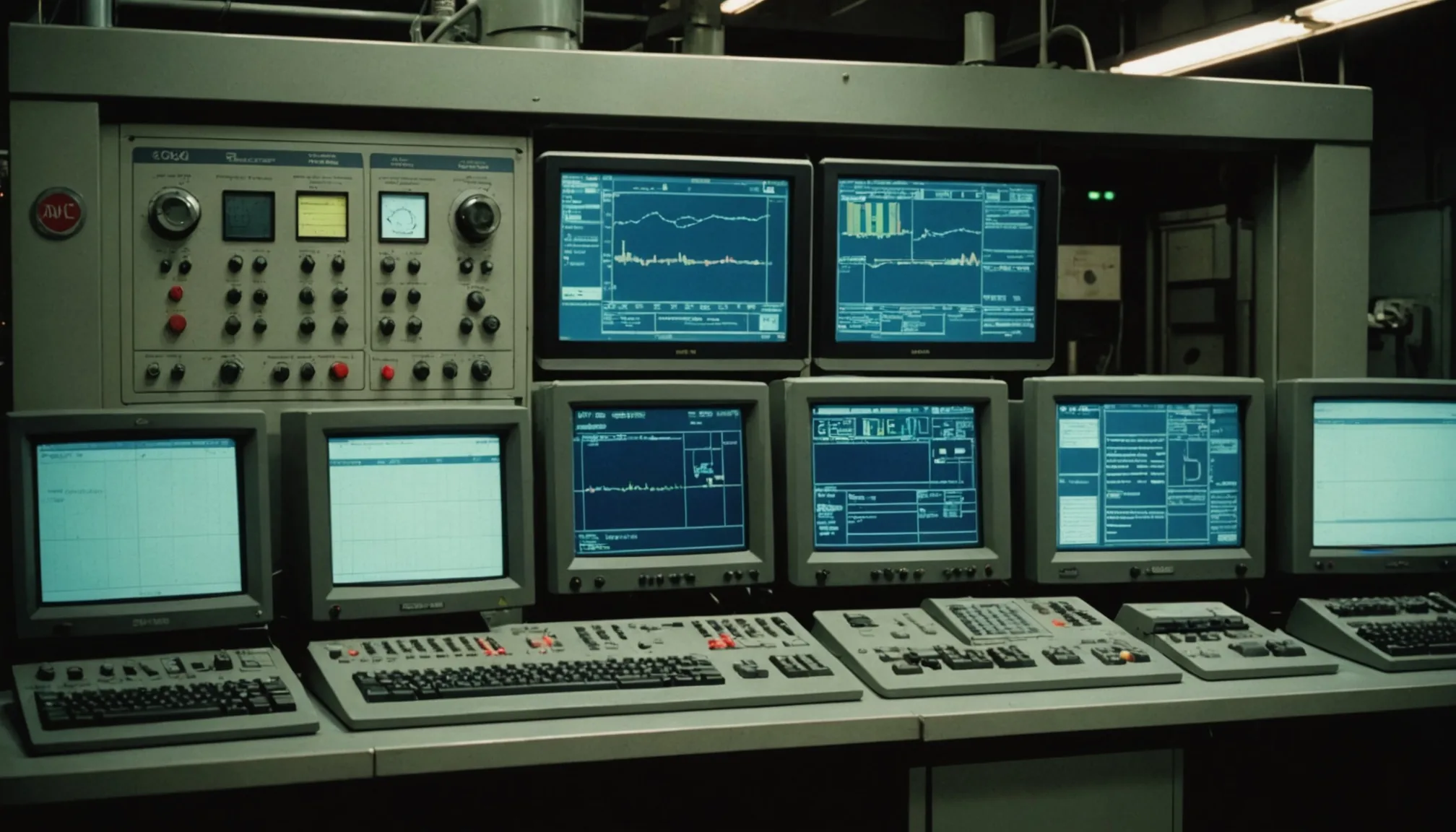

Aggregated Subscriptions vs. Raw Subscriptions

OPC-UA supports two access paths. Live subscriptions deliver each sampled value that passes the monitored item's filter as a data change notification. Historical Access (Part 11 of the specification, the OPC-UA successor to Classic OPC HDA) uses the HistoryRead service to return either raw archived values or server-computed aggregates. For real-time deviation detection, you want raw subscriptions. For retrospective analysis against a historian archive, aggregate history reads are more efficient.

The practical separation is this: live process monitoring connects via raw subscriptions with the sampling and deadband parameters discussed above. Batch post-analysis and retrospective model training pull from the historian via aggregate history reads, requesting time-weighted averages over 1-minute or 5-minute windows to normalize the sample density for the modeling pipeline.
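To show what a time-weighted average does to uneven sample density, here is a minimal sketch. It uses sample-and-hold (stepped) weighting: each value counts for as long as it was in effect. That is a simplification and is not identical to the OPC-UA TimeAverage aggregate defined in Part 13, which has its own bounding-value rules. The function name is illustrative.

```python
def time_weighted_averages(samples, start, end, window):
    """Time-weighted average per window, holding each value until the next sample.

    samples: (t, value) pairs sorted by t, with samples[0][0] <= start.
    Returns one average per window covering [start, end).
    """
    if not samples or samples[0][0] > start:
        raise ValueError("need a sample at or before the first window start")
    averages = []
    w_start = start
    while w_start < end:
        w_end = min(w_start + window, end)
        area = 0.0
        for i, (t, v) in enumerate(samples):
            # Each value holds from its timestamp until the next sample (the last one holds indefinitely).
            seg_end = samples[i + 1][0] if i + 1 < len(samples) else float("inf")
            overlap = min(seg_end, w_end) - max(t, w_start)
            if overlap > 0:
                area += v * overlap
        averages.append(area / (w_end - w_start))
        w_start = w_end
    return averages


print(time_weighted_averages([(0, 10.0), (30, 20.0), (90, 40.0)], 0, 120, 60))
```

In the second 60-second window, a plain mean of the samples that fall inside it would give 40.0, because only one sample lands there. The time-weighted value of 30.0 accounts for the 20.0 reading holding for the first half of the window. That weighting is what evens out sample density.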

Where things go wrong is when engineers conflate the two pathways. Raw subscription data is not the same as historian-stored data if the historian applies its own compression algorithm on ingestion, which DeltaV and most third-party historians do. The historian's compression deadband may differ from your OPC-UA subscription deadband, meaning the archived record has different temporal resolution than the live stream your analytics engine processed in real time. When you're comparing a live batch trajectory against historical golden batch data pulled from the historian, you need to normalize both to the same sampling interval before the comparison is meaningful.

Vendor Quirks Worth Knowing Before You Start

Sartorius BIOSTAT systems with the current firmware implement OPC-UA well by pharmaceutical equipment standards. Address space is organized, data types are clean, and the server supports Security Mode Sign and Encrypt without special configuration. The one consistent issue we've seen is that the server's actual sampling rate for fast-changing variables (particularly DO during sparging events) can fall short of the configured rate under high load. If you're also streaming from CAN bus sensors on the same controller, isolate the OPC-UA connection on a separate network interface.
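This kind of shortfall is easy to quantify. Temporarily subscribe to DO with the deadband disabled, so every sample should produce a notification, and inspect the gaps between source timestamps. The revised sampling interval a server returns when the monitored item is created is only what it agreed to, not what it achieves under load. Below is a minimal sketch; the 20% tolerance and the function name are arbitrary illustrative choices, not vendor figures.

```python
import statistics


def sampling_shortfall(timestamps, configured_interval, tolerance=0.2):
    """Compare observed inter-sample gaps (seconds) with the configured sampling interval.

    Intended for a diagnostic monitored item with no deadband; with a deadband,
    long gaps are expected and this check is meaningless.
    Returns (median_gap, fraction_of_gaps_longer_than_interval_plus_tolerance).
    """
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if not gaps:
        raise ValueError("need at least two timestamps")
    limit = configured_interval * (1 + tolerance)
    late = sum(1 for g in gaps if g > limit)
    return statistics.median(gaps), late / len(gaps)
```

A healthy median paired with a rising late fraction during sparging is the signature described above. The median alone would hide it.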

Applikon appliFlex and appliSens controllers vary by generation. The newer appliFlex units have a well-implemented OPC-UA server. Older appliSens controllers sometimes expose an incomplete node set, omitting the feed pump flow variable from the default address space. That variable exists on the controller, but you have to explicitly request that it be added to the OPC-UA configuration. This is a setup step that happens once and is easy to miss if you're commissioning off the default export from the Applikon configuration tool.
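A cheap guard is to compare the browsed address space against the signals your analytics actually need. Run it at commissioning and again after any controller change. Below is a minimal sketch. The browse names are hypothetical. On a system with numeric NodeIds, you would compare against the entries of your mapping table instead.

```python
# Hypothetical browse names for the signals the analytics pipeline depends on.
REQUIRED_SIGNALS = {"DO", "pH", "Temperature", "Agitation", "FeedPumpFlow"}


def missing_signals(browsed_names, required=REQUIRED_SIGNALS):
    """Return required signals absent from the browsed address space, sorted for stable reports."""
    return sorted(set(required) - set(browsed_names))
```

An omitted feed pump flow variable then shows up as a commissioning failure instead of a hole in the first batch record.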

Eppendorf BioFlo 320 and 720 units are generally solid on OPC-UA, but the timestamp behavior differs from Sartorius. Eppendorf timestamps reflect the server's read time, not the sensor measurement time. For most analyses, the difference is under 100 ms and irrelevant. For precise time-sync work across multiple bioreactors where you're correlating events across vessels, that offset accumulates and requires correction against a GPS-synchronized or NTP-synchronized time reference.
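Where a controller's timestamps are known to lag the measurement, one simple correction is a per-controller offset estimated during commissioning against a synchronized reference. Below is a minimal sketch. Treating the lag as constant is a simplifying assumption. The 80 ms value is a hypothetical placeholder consistent with the under-100 ms difference mentioned above, not a measured Eppendorf figure.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-controller corrections, estimated at commissioning against an
# NTP-synchronized reference. Positive values mean the reported timestamp is late.
TIMESTAMP_OFFSETS = {
    "bioreactor-1": timedelta(milliseconds=80),
    "bioreactor-2": timedelta(0),
}


def corrected_timestamp(controller: str, reported: datetime) -> datetime:
    """Shift a reported timestamp back by the controller's known offset.

    Raises KeyError for controllers with no recorded offset, rather than
    silently assuming zero.
    """
    if reported.tzinfo is None:
        raise ValueError("use timezone-aware UTC timestamps")
    return reported - TIMESTAMP_OFFSETS[controller]


print(corrected_timestamp("bioreactor-1", datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)))
```

Keeping the offsets in a single, inspectable table means the correction applied to any run can be audited later.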

Time synchronization matters more than most integration guides acknowledge. Seriously. When you're correlating process events across four bioreactors on a shared production floor, a 3-second timestamp drift between OPC-UA servers on different controllers produces visible artifacts in multivariate cross-vessel analysis. NTP synchronization across all control system nodes to a shared time server should be a commissioning requirement, not an afterthought.
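A commissioning check along these lines can catch drift before it reaches the analysis. Below is a minimal sketch. It assumes you can read each server's clock (for example, the standard ServerStatus CurrentTime variable) and a reference clock at effectively the same moment. Network round-trip time sets the floor on how small an offset this can resolve. The 0.5-second tolerance and the function name are illustrative assumptions.

```python
def clock_check(readings, tolerance_s=0.5):
    """Compare each server's clock with a reference.

    readings maps server name -> (server_time, reference_time) in seconds,
    captured at the same moment. Returns ({server: offset} for servers outside
    tolerance, spread between the fastest and slowest server clocks).
    """
    offsets = {name: server - ref for name, (server, ref) in readings.items()}
    flagged = {name: off for name, off in offsets.items() if abs(off) > tolerance_s}
    spread = max(offsets.values()) - min(offsets.values())
    return flagged, spread
```

The spread matters as much as the individual offsets. Two servers each within tolerance but on opposite sides of the reference can still disagree with each other by nearly twice the tolerance.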

What This Means for Your Integration Project

The work of connecting bioreactor data to an analytics platform is not primarily a software problem. It's a configuration and commissioning problem. The OPC-UA specification is well-defined. The vendor implementations are imperfect in known, documented ways. The gaps between them are bridgeable, but only if you've mapped the address space against actual controller output, validated the sampling configuration against real sensor behavior, and confirmed that timestamps align before you start training any model on the data.

We built our own OPC-UA integration layer against DeltaV and Siemens SIMATIC PCS 7 systems specifically because we needed those validations to be part of the commissioning process, not discovered six batches in. The address space map, the subscription configuration parameters, and the timestamp correction offsets all live in the integration configuration layer. Engineers can inspect them. That transparency matters when a batch deviation triggers a CAPA investigation and the first question is whether the data pipeline was performing correctly during the affected run.

Evaluating OPC-UA integration for your bioreactor fleet? Talk to our team about how Fermentile's data pipeline layer handles address space mapping and subscription configuration across Sartorius, Applikon, and Emerson DeltaV environments.