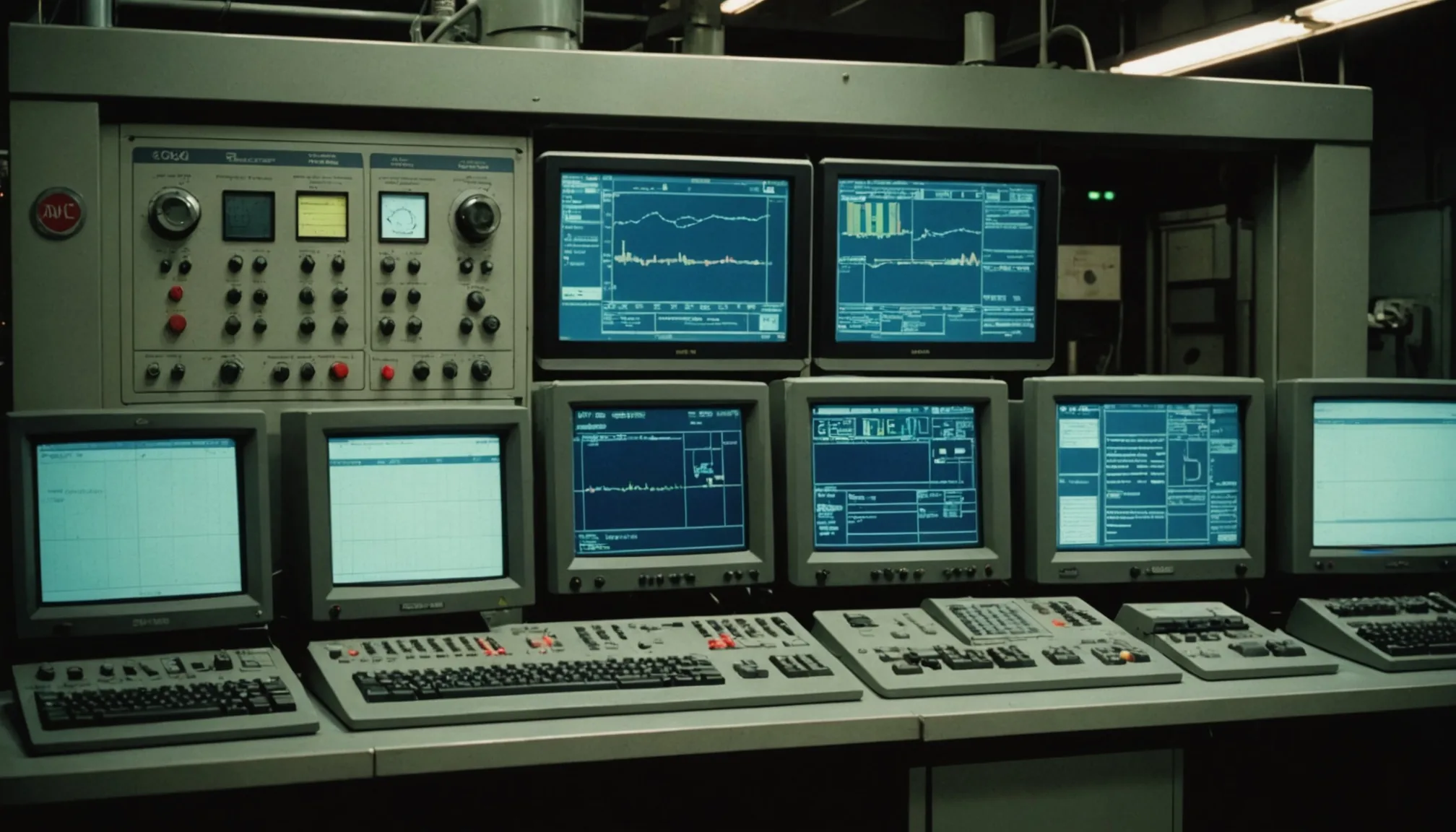

The Emerson DeltaV historian stores every process variable from a fermentation run, but extracting actionable insight from that archive requires more than trend queries. We've seen CDMOs sit on two years of perfectly time-stamped pH, DO, and agitation data and still fail to answer basic questions about why Batch 47 outperformed everything else. The data exists. The tooling gap is real.

Tag Naming Conventions: The Foundation Nobody Agrees On

Start here before any analytics conversation. DeltaV historian tags inherit from the module-level naming hierarchy in Control Studio, which means a tag path like UNIT_A/FB_BIOREACTOR_01/AI_DO_PV is both perfectly sensible and completely non-portable across sites. The moment a second manufacturing site comes online, tag names diverge unless there was a site-wide naming standard baked in from day one.

In our experience, roughly 60% of multi-site CDMOs have inconsistent tag naming across facilities. Not wildly different. Just different enough that SQL or PI AF queries written for Site A need manual editing before they run on Site B. The fix is not technically complex: agree on a three-level hierarchy (site prefix, unit class, signal descriptor), document it in a master tag register, and enforce it during DCS commissioning. The hard part is organizational, not technical.

One practical shortcut: use ISA-88 equipment module naming as the middle tier. If your DeltaV modules already follow ISA-88 phase logic, you're halfway there. The signal descriptor level then standardizes around a controlled vocabulary: PV for process value, SP for setpoint, OP for output, MODE for module mode. Simple. Works across vendors too, which matters when OSIsoft PI is sitting downstream aggregating from multiple DCS systems on the same campus.
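A naming standard is easier to enforce when a script can check it. The following is a minimal sketch, assuming a slash-separated layout of site prefix, equipment module, and signal descriptor. The regular expression, the example site code `BOS`, and the `validate_tag` helper are hypothetical illustrations, not a DeltaV or PI convention; only the PV/SP/OP/MODE vocabulary comes from the text above.

```python
import re

# Controlled vocabulary for the final descriptor, as listed in the text above.
DESCRIPTORS = {"PV", "SP", "OP", "MODE"}

# Hypothetical layout: SITE/MODULE_NN/SIGNAL_DESCRIPTOR, e.g. "BOS/BIOREACTOR_01/DO_PV".
TAG_PATTERN = re.compile(
    r"^(?P<site>[A-Z]{2,4})/"                        # site prefix (assumed 2 to 4 letters)
    r"(?P<module>[A-Z]+_\d{2})/"                     # equipment module plus two-digit index
    r"(?P<signal>[A-Z0-9]+)_(?P<descriptor>[A-Z]+)$"  # signal and descriptor
)


def validate_tag(tag: str) -> list:
    """Return a list of problems; an empty list means the tag conforms."""
    match = TAG_PATTERN.match(tag)
    if match is None:
        return [f"{tag}: does not match SITE/MODULE_NN/SIGNAL_DESCRIPTOR"]
    descriptor = match.group("descriptor")
    if descriptor not in DESCRIPTORS:
        return [f"{tag}: descriptor {descriptor!r} is not in the controlled vocabulary"]
    return []


if __name__ == "__main__":
    for tag in ["BOS/BIOREACTOR_01/DO_PV", "UNIT_A/FB_BIOREACTOR_01/AI_DO_PV"]:
        print(tag, validate_tag(tag) or "OK")
```

Run against the master tag register during commissioning, a check like this turns the naming standard from a document into a gate.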

Deadband Configuration: The Trade-Off Nobody Tells You About

DeltaV historian has two compression settings that directly control how much data actually gets written: exception deviation (deadband) and compression deviation. Exception deviation is the first gate: if a value does not change by more than X% of span, no new record is written. Compression deviation is the second: after the exception filter, the swinging-door algorithm further reduces storage by approximating linear segments.
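To make the two gates concrete, here is a simplified sketch of both filters in plain Python. It is a textbook version of deadband filtering and swinging-door compression, not the historian's actual implementation: real historians add minimum and maximum time limits and other details that are omitted here. Samples are assumed to be `(time, value)` pairs with strictly increasing times, and deviations are given in engineering units rather than percent of span.

```python
def exception_filter(samples, exc_dev):
    """First gate: keep a sample only if it moved more than exc_dev
    from the last kept value."""
    kept = [samples[0]]
    for t, v in samples[1:]:
        if abs(v - kept[-1][1]) > exc_dev:
            kept.append((t, v))
    return kept


def swinging_door(samples, comp_dev):
    """Second gate: archive a point only when the incoming data can no
    longer be covered by one straight line within +/- comp_dev."""
    if len(samples) < 3:
        return list(samples)
    archived = [samples[0]]
    t0, v0 = samples[0]        # current anchor (last archived point)
    max_up = float("-inf")     # steepest slope seen from the upper pivot
    min_low = float("inf")     # shallowest slope seen from the lower pivot
    prev = samples[0]
    for t, v in samples[1:]:
        dt = t - t0
        max_up = max(max_up, (v - (v0 + comp_dev)) / dt)
        min_low = min(min_low, (v - (v0 - comp_dev)) / dt)
        if max_up > min_low:
            # The doors have swung past parallel: archive the previous
            # point and restart the doors from it.
            archived.append(prev)
            t0, v0 = prev
            dt = t - t0
            max_up = (v - (v0 + comp_dev)) / dt
            min_low = (v - (v0 - comp_dev)) / dt
        prev = (t, v)
    if archived[-1] != prev:
        archived.append(prev)  # always keep the most recent point
    return archived


if __name__ == "__main__":
    ramp_then_drop = [(0, 0.0), (1, 1.0), (2, 2.0), (3, 1.0), (4, 0.0)]
    print(swinging_door(ramp_then_drop, comp_dev=0.1))
```

The useful thing to notice is that a straight ramp of any length collapses to two archived points, while a change of direction forces a new one. Widen the deviation and small changes of direction disappear too.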

For a typical bioreactor temperature loop with a 2-degree setpoint, setting exception deviation at 0.05 degrees and compression deviation at 0.1 degrees is reasonable during stable phases. But during exponential growth or pH control excursions, that same configuration will flatten the response curve in the historian. You lose the dynamics. Exactly the data you need for deviation root-cause analysis gets smoothed away.

Our recommendation: use time-based archiving for critical analyte tags (DO, pH, CO2 off-gas) during active process phases. Fixed-interval at 5 to 10 seconds costs about 3 MB per tag per day. For a 30-tag bioreactor with a 14-day fed-batch run, that is under 1.5 GB total. Storage is cheap. Missed dynamics are expensive.
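The storage estimate is simple enough to check by hand, and worth parameterizing so you can rerun it for your own tag counts. This sketch takes the 3 MB per tag per day figure quoted above as a given; the function name is ours.

```python
def run_storage_mb(mb_per_tag_per_day, tags, run_days):
    """Total archive size for one run, in megabytes."""
    return mb_per_tag_per_day * tags * run_days


if __name__ == "__main__":
    total_mb = run_storage_mb(mb_per_tag_per_day=3, tags=30, run_days=14)
    # Decimal gigabytes (1 GB = 1000 MB).
    print(f"{total_mb} MB, or {total_mb / 1000:.2f} GB")
```

For the 30-tag, 14-day case that is 3 × 30 × 14 = 1,260 MB, comfortably under the 1.5 GB ceiling.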

There is also a batch mode consideration. Deadband configuration that makes sense for continuous monitoring is wrong for batch logging. More on that below.

Batch vs. Continuous Logging: Different Goals, Different Configs

This distinction trips up a surprising number of teams. Continuous logging optimizes for long-term trend storage, minimal redundancy, and manageable database growth. Batch logging has a different contract: every record from inoculation to harvest must be complete, integrity-checked, and attributable to a specific batch ID.

DeltaV's batch historian uses event-framed archiving linked to S88 batch records. When a Phase starts, the historian begins writing all configured tags for that Phase at the configured scan rate. When the Phase completes, it stops. Clean event frames. Queryable by batch ID, phase name, equipment ID. This is what feeds batch genealogy and enables golden-batch analysis.

The failure mode we see most often: teams configure DeltaV historian as if it were PI only, with exception reporting and heavy compression. They then try to do batch comparisons and find that two batches sampled at different compression intervals produce non-aligned time series. Trying to overlay DO profiles for 12 runs when each run was compressed differently is frustrating work. Align the scan rates first. Standardize compression settings per phase class. Then the analytics layer actually works.

PI AF for Bioreactor Hierarchies

OSIsoft PI Asset Framework is where the real analytics power lives, but it requires deliberate setup. PI AF is essentially a relational layer on top of the PI Data Archive time-series store. You define asset templates: a Bioreactor template with child elements for Agitator, pH Loop, DO Control, Gas Mix, and so on. Each element has attribute references pointing back to specific PI tags. Once built, you query by asset, not by tag name.

For a CDMO running 12 bioreactors across two scales (200L and 2000L), a proper PI AF hierarchy means you can write a PI Data Link formula that calculates average DO during exponential phase across all 2000L vessels over the last 90 days, in about 15 seconds. Without AF, that same query requires knowing 12 specific tag names, building a manual pivot, and hoping the naming was consistent. Spoiler: it usually was not.

Build the template once. Stamp it N times for N vessels. Map attributes to tags via the PI AF tag reference syntax. Use element relative references so that attribute formulas travel with the template rather than being hardcoded to a specific unit. This is the configuration that makes scale-up comparisons tractable.
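The template idea can be illustrated without touching the AF SDK. The sketch below is a plain Python model of "build once, stamp N times": each attribute carries a tag pattern with an element placeholder, so nothing is hardcoded to a specific vessel. The attribute names, tag patterns, and vessel names are hypothetical. In PI AF itself the equivalent mechanism lives in the attribute's data reference configuration, not in Python.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttributeTemplate:
    name: str         # attribute name as an analyst would query it
    tag_pattern: str  # tag name pattern with an {element} placeholder


# Hypothetical bioreactor template; a real one would have many more attributes.
BIOREACTOR_TEMPLATE = [
    AttributeTemplate("pH", "{element}/PH_PV"),
    AttributeTemplate("DO", "{element}/DO_PV"),
    AttributeTemplate("Agitation", "{element}/AGIT_PV"),
]


def stamp(template, element_name):
    """Resolve every attribute in the template for one vessel."""
    return {a.name: a.tag_pattern.format(element=element_name) for a in template}


# Twelve vessels, one template.
vessels = [f"BIOREACTOR_{n:02d}" for n in range(1, 13)]
hierarchy = {v: stamp(BIOREACTOR_TEMPLATE, v) for v in vessels}

if __name__ == "__main__":
    print(hierarchy["BIOREACTOR_07"]["DO"])
```

Adding a thirteenth vessel is one more entry in `vessels`. Adding a new attribute is one more line in the template, and every vessel picks it up.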

One AF-specific pitfall: avoid building attribute hierarchies deeper than 4 levels. PI System Explorer performance degrades noticeably above 5 levels on large databases, so a 4-level cap leaves some margin, and deep nesting forces downstream Python or R queries to handle long, brittle attribute paths. Keep it flat. Four levels is enough for any bioreactor setup we've worked with.

Querying Strategies for Golden-Batch Training Data

The goal is a clean training set: N batches identified as golden by process development, time-aligned to a common phase reference point, and exported as a consistent multi-variate array. This is harder than it sounds.

Phase reference alignment is the first challenge. Batch A was 72 hours long. Batch B was 78 hours. You cannot simply align on wall-clock time. Align on process phase. If your DeltaV batch records distinguish Inoculation, Growth Phase 1, and Exponential Phase, use phase start as the reference zero for each batch. PI AF event frames preserve this naturally if your batch records were properly linked.
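As a sketch of what phase-referenced alignment means in practice, the function below converts wall-clock timestamps into hours elapsed since the phase start for each batch. It assumes you already have the phase start time per batch (for example from an event frame) and a list of `(timestamp, value)` samples; the batch dates and DO values are made up for illustration.

```python
from datetime import datetime


def align_to_phase(samples, phase_start):
    """Convert (timestamp, value) pairs to (hours since phase start, value).
    Samples recorded before the phase started are dropped."""
    return [
        ((ts - phase_start).total_seconds() / 3600.0, value)
        for ts, value in samples
        if ts >= phase_start
    ]


# Two hypothetical batches that entered the same phase on different days.
batch_a = [
    (datetime(2025, 3, 1, 7, 0), 62.0),   # before phase start, dropped
    (datetime(2025, 3, 1, 8, 0), 60.0),
    (datetime(2025, 3, 1, 9, 0), 55.0),
]
batch_b = [
    (datetime(2025, 4, 10, 14, 30), 61.0),
    (datetime(2025, 4, 10, 15, 30), 54.0),
]

aligned_a = align_to_phase(batch_a, datetime(2025, 3, 1, 8, 0))
aligned_b = align_to_phase(batch_b, datetime(2025, 4, 10, 14, 30))

if __name__ == "__main__":
    print(aligned_a)
    print(aligned_b)
```

After alignment both batches share the same time axis (0.0 h, 1.0 h, and so on), so their DO profiles can be overlaid directly regardless of total batch duration.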

Tag selection is the second challenge. Do not pull every tag. We've found that starting with 8 to 12 core analyte and control tags produces better ML model performance than pulling 80 tags with heavy inter-correlation. The 80-tag pull creates collinearity problems and inflates the feature set with redundant control signals. pH PV, pH SP, DO PV, DO SP, agitation rate, temperature, base addition rate, feed rate, CO2 off-gas, and dissolved CO2 covers most fed-batch use cases. Ten tags. That's your starting point.
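One quick way to see the collinearity problem before it reaches a model is to flag tag pairs whose signals are almost perfectly correlated. The sketch below uses a hand-rolled Pearson correlation so it runs without third-party packages. The 0.95 threshold and the sample series are illustrative assumptions, not recommendations.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length series (0.0 if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0
    return cov / (sd_x * sd_y)


def collinear_pairs(columns, threshold=0.95):
    """Return (tag_a, tag_b, r) for every pair with |r| at or above threshold."""
    flagged = []
    for (name_a, xa), (name_b, xb) in combinations(columns.items(), 2):
        r = pearson(xa, xb)
        if abs(r) >= threshold:
            flagged.append((name_a, name_b, round(r, 3)))
    return flagged


# Made-up series: the agitator output tracks the agitation rate exactly.
columns = {
    "agitation_pv": [100, 120, 140, 160, 180],
    "agitation_op": [20, 24, 28, 32, 36],
    "ph_pv": [7.0, 6.9, 7.1, 6.95, 7.05],
}

if __name__ == "__main__":
    print(collinear_pairs(columns))
```

A flagged pair is a candidate for dropping one of the two tags, which is usually how an 80-tag pull shrinks toward the ten-tag starting set.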

Resampling is the third. Interpolate all tags to a common 1-minute or 5-minute interval using PI's linear interpolation. PI OLEDB or the PI Web API both expose this natively. Do the resampling in PI, not in pandas. Faster. Fewer edge cases. Less data movement.
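With PI Web API, server-side resampling comes down to asking the stream's interpolated endpoint for a fixed interval. The sketch below only builds the request URL, so it runs without a PI server. The host name and the Web ID are placeholders, and authentication is deliberately left out because it depends on your environment.

```python
from urllib.parse import urlencode

# Placeholder host; substitute your own PI Web API base URL.
BASE_URL = "https://piwebapi.example.com/piwebapi"


def interpolated_url(web_id, start, end, interval="1m"):
    """Build a PI Web API request for interpolated values of one stream.

    web_id   -- the stream's Web ID (placeholder in the example below)
    start    -- start time, ISO 8601 or PI time syntax
    end      -- end time, same formats
    interval -- resampling interval, e.g. "1m" or "5m"
    """
    query = urlencode({"startTime": start, "endTime": end, "interval": interval})
    return f"{BASE_URL}/streams/{web_id}/interpolated?{query}"


if __name__ == "__main__":
    print(interpolated_url("WEBID_PLACEHOLDER",
                           "2025-01-01T00:00:00Z",
                           "2025-01-02T00:00:00Z",
                           interval="5m"))
```

Requesting every tag with the same start, end, and interval gives you series that already line up row for row, which is the point of doing this step in PI.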

Export Patterns: Python, R, and Cloud Pipelines

Three patterns we see working in production at CDMOs today.

| Pattern | Stack | Best For | Watch Out For |
|---|---|---|---|
| PI Web API + Python requests | Python, PI Web API 2019+ | Ad hoc batch pulls, rapid prototyping | Kerberos auth in domain environments; bulk pulls throttle at 150K row default limit |
| PIconnect (Python library) | Python, PI SDK (Windows only) | Analysts on Windows with PI client installed | SDK dependency makes containerization difficult; not viable for Linux-based cloud infra |
| PI Integrator for Business Intelligence | PI Integrator, Azure Data Lake / Snowflake | Continuous streaming to cloud data warehouse for ML pipelines | Licensing cost; requires IT involvement; latency typically 5-15 minutes, not real-time |
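For the first pattern in the table, most of the Python work happens after the HTTP call: turning the JSON response into clean rows. The sketch below assumes the usual shape of a PI Web API stream response, an `Items` list whose entries carry `Timestamp`, `Value`, and `Good`. The sample payload is made up, and the decision to skip bad-quality and non-numeric values is ours, so adjust it to your own data quality rules.

```python
def items_to_rows(payload):
    """Flatten a stream response into (timestamp, float value) rows,
    skipping bad-quality samples and non-numeric states."""
    rows = []
    for item in payload.get("Items", []):
        if not item.get("Good", False):
            continue
        value = item["Value"]
        if isinstance(value, dict):
            # Digital or system states (e.g. "No Data") arrive as objects.
            continue
        rows.append((item["Timestamp"], float(value)))
    return rows


# Illustrative payload only; not captured from a real server.
sample = {
    "Items": [
        {"Timestamp": "2025-01-01T00:00:00Z", "Value": 41.2, "Good": True},
        {"Timestamp": "2025-01-01T00:01:00Z", "Value": 40.8, "Good": True},
        {"Timestamp": "2025-01-01T00:02:00Z", "Value": 39.9, "Good": False},
        {"Timestamp": "2025-01-01T00:03:00Z",
         "Value": {"Name": "No Data", "Value": 248}, "Good": True},
    ]
}

if __name__ == "__main__":
    for row in items_to_rows(sample):
        print(row)
```

Keeping this step as a small pure function makes it easy to unit test against saved responses before pointing it at a live server.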

For R users, the RPIAF package works against PI Web API directly. Same authentication considerations apply. The tidyverse workflow maps naturally onto PI Web API's batch-retrieved interpolated data format.

One observation from our own data work: the move to cloud is slower in regulated bioprocessing than the industry press suggests. As of early 2026, most CDMOs we interact with are still doing batch analytics on-premise, with cloud being a future-state aspiration rather than an active deployment. The barrier is not technical. It is data governance: 21 CFR Part 11 compliance, audit trail requirements, and the question of whether a cloud-resident data lake counts as a validated system under FDA guidance.

Integration Pitfalls: The Three We See Repeatedly

First: DeltaV to PI interface configuration. DeltaV uses the OPC-DA or OPC-UA interface to push to PI. The PI Interface for OPC-DA is long-lived but has documented scan-rate limitations above 2,000 tags per interface node. If a large DeltaV system has 8,000 process tags and a single interface node, the historian will fall behind during high-event periods like batch transitions and control mode changes. Symptom: PI shows data gaps of 15 to 30 seconds during phase changes. Fix: split the tags across multiple interface nodes or migrate to a PI-to-PI interface architecture.
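Planning the split is simple arithmetic. The sketch below works out how many interface nodes are needed to stay at or under the 2,000-tag figure mentioned above, then distributes tags round-robin. The tag names are placeholders, and a real split would more likely follow unit or area boundaries than a blind round-robin.

```python
from math import ceil


def split_tags(tags, max_per_node=2000):
    """Distribute tags across the fewest nodes that keep each node
    at or below max_per_node tags."""
    n_nodes = ceil(len(tags) / max_per_node)
    # Round-robin assignment keeps node loads within one tag of each other.
    return [tags[i::n_nodes] for i in range(n_nodes)]


if __name__ == "__main__":
    tags = [f"TAG_{n:04d}" for n in range(8000)]  # placeholder tag names
    nodes = split_tags(tags)
    print(len(nodes), [len(node) for node in nodes])
```

For the 8,000-tag example this yields four nodes of 2,000 tags each.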

Second: time synchronization. DeltaV and PI must share a time source. If the DeltaV server clock drifts relative to the PI server by more than 2 seconds, event-frame boundaries become unreliable. We have seen batch phase durations appear 4 minutes shorter or longer than DCS records show, purely from NTP misconfiguration. Fix: validate NTP sync as part of historian commissioning qualification. Not just "it is configured," but actually measured, with a logged delta.
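"Measured, with a logged delta" can be as simple as the sketch below. It assumes you have some way to read the current time from each server at nearly the same instant, which is outside the scope of this example, and it compares the two readings against the 2-second tolerance. The function names and log format are ours.

```python
import logging
from datetime import datetime, timezone

MAX_DRIFT_S = 2.0  # tolerance from the text above

logger = logging.getLogger("historian.timesync")


def check_sync(deltav_time, pi_time, max_drift_s=MAX_DRIFT_S):
    """Compare two clock readings and log the measured delta in seconds."""
    delta = (deltav_time - pi_time).total_seconds()
    within = abs(delta) <= max_drift_s
    logger.log(
        logging.INFO if within else logging.WARNING,
        "DeltaV minus PI clock delta: %+.3f s (limit %.1f s)", delta, max_drift_s,
    )
    return {"delta_s": delta, "within_tolerance": within}


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    # Hypothetical readings taken at the same moment from each server.
    deltav_now = datetime(2025, 1, 1, 12, 0, 3, 500000, tzinfo=timezone.utc)
    pi_now = datetime(2025, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
    print(check_sync(deltav_now, pi_now))
```

Scheduling a check like this and keeping the log gives you the evidence a commissioning qualification needs, rather than a screenshot of an NTP settings page.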

Third: PI trust table misconfigurations in locked-down OT networks. The PI server's default trust behavior requires the interface node's machine name, as entered in the trust table, to resolve correctly via DNS. In air-gapped OT environments where DNS is not managed, interface nodes show as connected in PI SMT but data silently stops flowing. The PI message log will show authentication warnings, but the interface status light stays green. Check the PI Interface Diagnostics tool, not just the interface status. A green light means nothing here.

In our experience, about 40% of DeltaV-to-PI integration issues trace back to one of these three root causes. They are not exotic. They are boring infrastructure problems that show up consistently. Know them before you troubleshoot for two days.

Closing Thought

Process data at CDMOs is genuinely valuable. The challenge is not collecting it. DeltaV has been collecting it reliably for years. The challenge is building the configuration and query infrastructure that makes it interrogable. Tag naming that survives site expansion. Deadband settings tuned to the analytical question, not just storage optimization. PI AF hierarchies that abstract away tag naming entirely. Export patterns that fit the actual compute environment.

That is the work. Unglamorous. Difficult. And absolutely worth doing before anyone installs a machine learning platform on top of an unreliable data foundation.
